Gemini 3.1 Deep Think (2026): Google’s Ultimate Logic AI!

⚡ The Stat-Shock Hook:

Standard AI models have officially hit a mathematical ceiling, but Google just shattered it. In February 2026, the Gemini 3.1 Deep Think engine scored an unprecedented 84.6% on the ARC-AGI-2 benchmark, well ahead of competitors, and it is changing how developers use “System 2” logic for complex engineering, debugging, and data science.

The “System 1” Hallucination Problem

To understand the magnitude of this review, we must establish historical context. Borrowing the terms of dual-process theory (see Wikipedia’s entry on the topic), AI development up until 2024 was focused almost entirely on “System 1” thinking. Large Language Models (LLMs) predicted the next most likely word instantly based on pattern matching. This made them fast, but unreliable at multi-step math and logic. If you asked an AI a novel logic puzzle, it would often confidently hallucinate an answer.

The paradigm shifted in late 2024 when the industry introduced “test-time compute,” allowing models to generate hidden chains of thought before responding. On February 11, 2026, Google DeepMind formally introduced Gemini 3 Deep Think to advance science and research. Just a week later, it rolled this technology into the developer ecosystem with Gemini 3.1 Pro. We are now evaluating an AI that actively pauses, writes code to test its own hypotheses, and self-corrects its logic before ever presenting the user with an answer.

Benchmark Dominance & The 3-Tier Slider

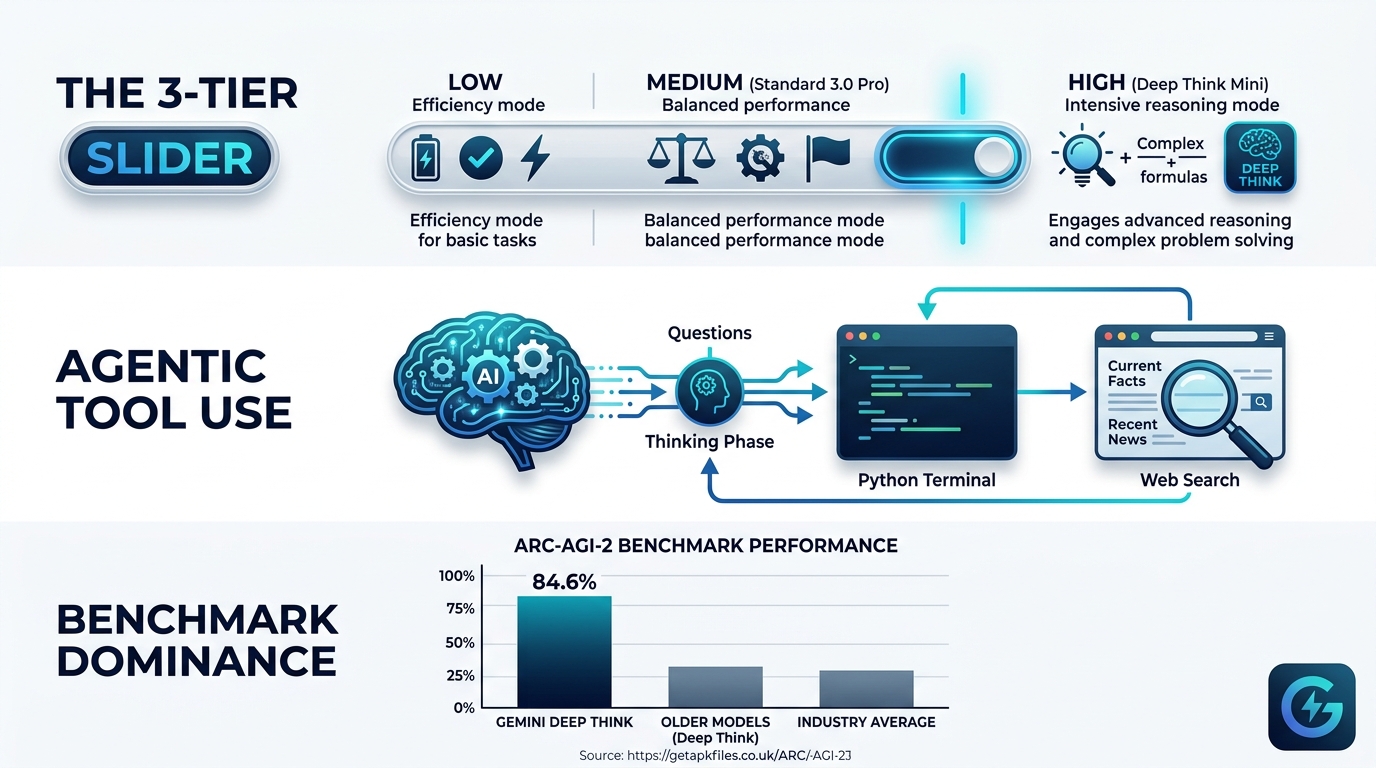

The empirical data from the February 2026 release is staggering. Deep Think isn’t just slightly better; it represents a generational leap in AI chatbots. According to DeepMind’s technical whitepapers, the dedicated Deep Think model achieved 84.6% on the ARC-AGI-2 benchmark (abstract reasoning puzzles), vastly outperforming OpenAI’s GPT-5.2 Thinking model (52.9%). It also secured 53.4% on the notoriously difficult “Humanity’s Last Exam” when allowed to use search and code execution tools.

However, deep reasoning requires massive compute overhead. Google solved this latency issue by deprecating separate reasoning models in favor of a unified endpoint. As documented by API implementation specialists at APIYI, developers now control a 3-tier “Thinking Level” slider:

- Low (1,024 Budget Tokens): Fast, System 1 responses for basic translation or summarization.

- Medium (8,192 Budget Tokens): Balanced reasoning, equivalent to the baseline intelligence of Gemini 3 Pro. Ideal for standard code reviews.

- High (32,768 Budget Tokens): Activates the Deep Think Mini engine. Utilized for mathematical proofs, complex algorithm design, and debugging deep structural errors.
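To make the three tiers concrete, here is a minimal Python sketch of how a team might route tasks to a thinking level. The token budgets are the ones listed above; the task categories, the `TASK_TO_LEVEL` table, and the `pick_thinking_level` helper are hypothetical names invented for illustration and are not part of any Google API.

```python
# Token budgets as listed in the article; everything else here is illustrative.
THINKING_BUDGETS = {
    "low": 1_024,
    "medium": 8_192,
    "high": 32_768,
}

# ASSUMPTION: hypothetical task categories, loosely following the article's examples.
TASK_TO_LEVEL = {
    "translation": "low",
    "summarization": "low",
    "code_review": "medium",
    "math_proof": "high",
    "algorithm_design": "high",
    "deep_debugging": "high",
}


def pick_thinking_level(task: str, default: str = "medium") -> tuple[str, int]:
    """Return (level, budget_tokens) for a task category, falling back to `default`."""
    level = TASK_TO_LEVEL.get(task, default)
    return level, THINKING_BUDGETS[level]


if __name__ == "__main__":
    for task in ("translation", "code_review", "math_proof", "unknown_task"):
        print(task, pick_thinking_level(task))
```

Keeping the mapping in one table makes it easy to audit which workloads are paying for the “High” tier, since that is where most of the latency and cost would be expected to land.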

Agentic Workflows and Multimodal Synthesis

What truly separates this model from its competitors is its ability to reason across multiple modalities. While other reasoning models are strictly text-based, Gemini 3.1 Pro seamlessly integrates a 1-million token context window with native support for text, images, audio, and video. You can upload an hour-long video recording of a mechanical system failing, and Deep Think will analyze the spatial geometry to hypothesize the point of failure.

Furthermore, Deep Think employs Agentic Tool Use. During its hidden thinking phase, the AI can recognize when it lacks specific real-time variables. It will autonomously execute Google Searches or write and run Python scripts in a sandbox to retrieve the missing data. This iterative cycle of hypothesis, testing, and self-correction is what elevates its performance on benchmarks like Humanity’s Last Exam.
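The hypothesis, test, and self-correct cycle can be illustrated with a toy loop. This is only a sketch of the general pattern, not a description of how Deep Think is implemented: `solve_iteratively` and `make_sqrt_problem` are hypothetical helpers, and the “tool” here is a plain Python check rather than a search call or sandbox.

```python
from typing import Any, Callable, Optional, Tuple


def solve_iteratively(
    propose: Callable[[Optional[Any]], Any],
    check: Callable[[Any], Tuple[bool, Optional[Any]]],
    max_rounds: int = 20,
) -> Tuple[Optional[Any], int]:
    """Propose a candidate, test it, and feed the test result into the next proposal."""
    feedback = None
    for round_no in range(1, max_rounds + 1):
        candidate = propose(feedback)      # hypothesis
        ok, feedback = check(candidate)    # test (stands in for running code or a search)
        if ok:
            return candidate, round_no
    return None, max_rounds                # gave up without a verified answer


def make_sqrt_problem(target: int):
    """Toy task: find an integer x with x * x == target, narrowing bounds from feedback."""
    lo, hi = 0, target

    def propose(feedback):
        nonlocal lo, hi
        if feedback is not None:           # self-correction step
            guess, verdict = feedback
            if verdict == "too_low":
                lo = guess + 1
            else:
                hi = guess - 1
        return (lo + hi) // 2

    def check(candidate):
        square = candidate * candidate
        if square == target:
            return True, None
        return False, (candidate, "too_low" if square < target else "too_high")

    return propose, check


if __name__ == "__main__":
    propose, check = make_sqrt_problem(1369)
    print(solve_iteratively(propose, check))
```

The important design point is that a failed check produces structured feedback instead of a bare failure, so each new hypothesis is informed by the last test rather than being a fresh guess.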

Real-World Enterprise Applications

There is a common misconception on forums that System 2 reasoning is only useful for PhD-level mathematics. Our expert review strongly disagrees. The practical applications for enterprise software engineers and data scientists are profound.

According to DeepMind’s case studies, engineers at Google’s Platforms and Devices division use Deep Think to accelerate the prototyping of complex physical components. In the software realm, developers feed massive, multi-file legacy codebases into the 1-million token context window, using the “High” thinking tier to safely refactor code without breaking dependent microservices. Logistics managers are utilizing the engine to dynamically evaluate and optimize global supply chains amidst messy, incomplete tracking data.
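Before feeding a legacy codebase into a long context window, it helps to estimate whether it will fit at all. The sketch below is a rough pre-flight check under stated assumptions: the 1,000,000-token limit is the figure quoted in this review, while the 4-characters-per-token ratio, the output reserve, and all function names are illustrative guesses rather than the model's real tokenizer or any official tooling.

```python
import os

CONTEXT_WINDOW_TOKENS = 1_000_000  # figure quoted in the article
CHARS_PER_TOKEN = 4                # ASSUMPTION: crude heuristic, not the real tokenizer


def estimate_tokens(text: str) -> int:
    """Rough token estimate: characters divided by CHARS_PER_TOKEN, rounded up."""
    return -(-len(text) // CHARS_PER_TOKEN)


def estimate_repo_tokens(root: str, extensions=(".py", ".js", ".ts", ".java", ".go")) -> int:
    """Walk `root` and sum the estimated tokens of every matching source file."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as handle:
                    total += estimate_tokens(handle.read())
    return total


def fits_in_context(token_count: int, reserve_for_output: int = 50_000) -> bool:
    """True if the input plus a hypothetical output reserve stays within the window."""
    return token_count + reserve_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    tokens = estimate_repo_tokens(".")
    print(f"estimated tokens: {tokens}, fits: {fits_in_context(tokens)}")
```

If the estimate lands near the limit, treat the result as a warning rather than a guarantee, because real token counts for source code can differ noticeably from a character-based guess.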

Comparative Review: Deep Think vs. GPT-5.2 Thinking

How does Google’s logic engine compare to the current industry standard in 2026?

| Metric / Feature | Gemini 3.1 Deep Think (Feb 2026) | GPT-5.2 Thinking (xhigh) |
|---|---|---|
| ARC-AGI-2 Benchmark | 84.6% | 52.9% |
| Multimodal Reasoning | Native integration (Text, Image, Audio, Video). | Primarily text-based reasoning paths. |
| Context Window | 1 Million Tokens (Pointwise retrieval: 26.3%). | Limited to smaller context buffers during deep logic. |
| Agentic Tooling | Native Search + Code Execution during thinking. | Requires external scaffolding for tool use. |
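To put the ARC-AGI-2 row in perspective, the short Python sketch below works out the gap between the two reported scores. The scores are the ones quoted in the table; the function name and the rounding choices are ours.

```python
def compare_scores(ours: float, theirs: float) -> dict:
    """Summarize the gap between two benchmark scores given in percent."""
    return {
        "gap_points": round(ours - theirs, 1),                       # percentage points
        "ratio": round(ours / theirs, 2),                            # times the baseline
        "relative_gain_pct": round((ours - theirs) / theirs * 100, 1),
    }


if __name__ == "__main__":
    # Scores as reported in the comparison table.
    print(compare_scores(84.6, 52.9))
    # {'gap_points': 31.7, 'ratio': 1.6, 'relative_gain_pct': 59.9}
```

In other words, the reported lead is 31.7 percentage points, or roughly a 60% relative improvement over the GPT-5.2 Thinking score.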

Our assessment clearly indicates that Google has taken the lead in raw abstract reasoning. The integration of “Thought Signatures,” which maintain reasoning continuity across multiple API calls, positions Gemini 3.1 Pro as the superior foundational model for autonomous AI agents.

Expert Multimedia Benchmarks

Deep Think Benchmark Testing: A comprehensive teardown of the February 2026 benchmarks, demonstrating how Deep Think utilizes parallel hypothesis generation to solve complex problems.

Deep Think Real-World Workflows: An excellent guide mapping out the specific syntax required to activate the Deep Think Mini engine via the API for data science applications.

Deploy Enterprise-Grade AI Today

To harness the full power of the 1-Million Token context window and the “High” thinking slider, you will need a robust API environment optimized for long-latency requests. Explore developer credits and scale your autonomous agents securely.

Access Google AI for Developers

Disclosure: This post contains affiliate links. We may earn a commission at no extra cost to you.

Deep Dive: API Integration Resources

Review our curated technical resources to master the implementation of Gemini 3.1 Pro’s thinking tokens.

Final Expert Verdict: The Future of Autonomous Agents

Our technical assessment of Gemini 3.1 Deep Think concludes that Google has successfully solved the “System 1” hallucination problem. By introducing a dynamic 3-tier thinking slider, developers can now balance compute costs while accessing unprecedented mathematical and logical reasoning power on demand.

With its ability to natively process multimodal inputs and utilize agentic tools during the thinking phase, Deep Think is not just a chatbot feature. It is a foundational engine for the next generation of autonomous enterprise software. If you are a developer attempting to scale complex workflows, migrating to the Gemini 3.1 Pro API is essential.
